Feature: Pooling

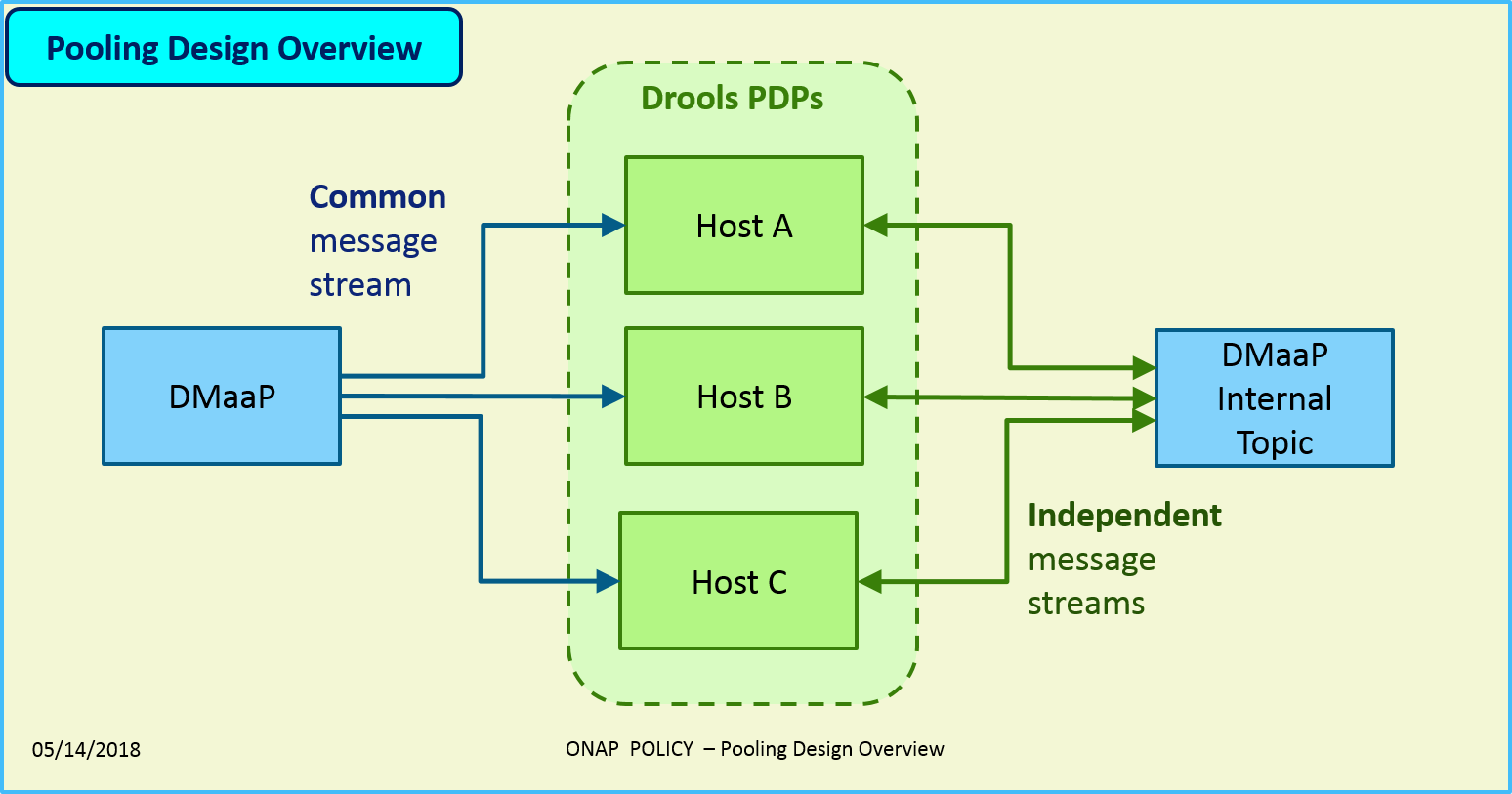

The Pooling feature provides the ability to load-balance work across a “pool” of active-active Drools-PDP hosts. This particular implementation uses a DMaaP topic for communication between the hosts within the pool.

- The pool is adjusted automatically, with no manual intervention, when:
  - a new host is brought online
  - a host goes offline, whether gracefully or due to a failure in the host or in the network

Assumptions and Limitations

- Session persistence is not required
- Data may be lost when processing is moved from one host to another
- The entire pool may shut down if the inter-host DMaaP topic becomes inaccessible

Key Points

- Requests are received on a common DMaaP topic
  - DMaaP distributes the requests randomly to the hosts
  - The request topic should have at least as many partitions as there are hosts
- Uses a single, internal DMaaP topic for all inter-host communication

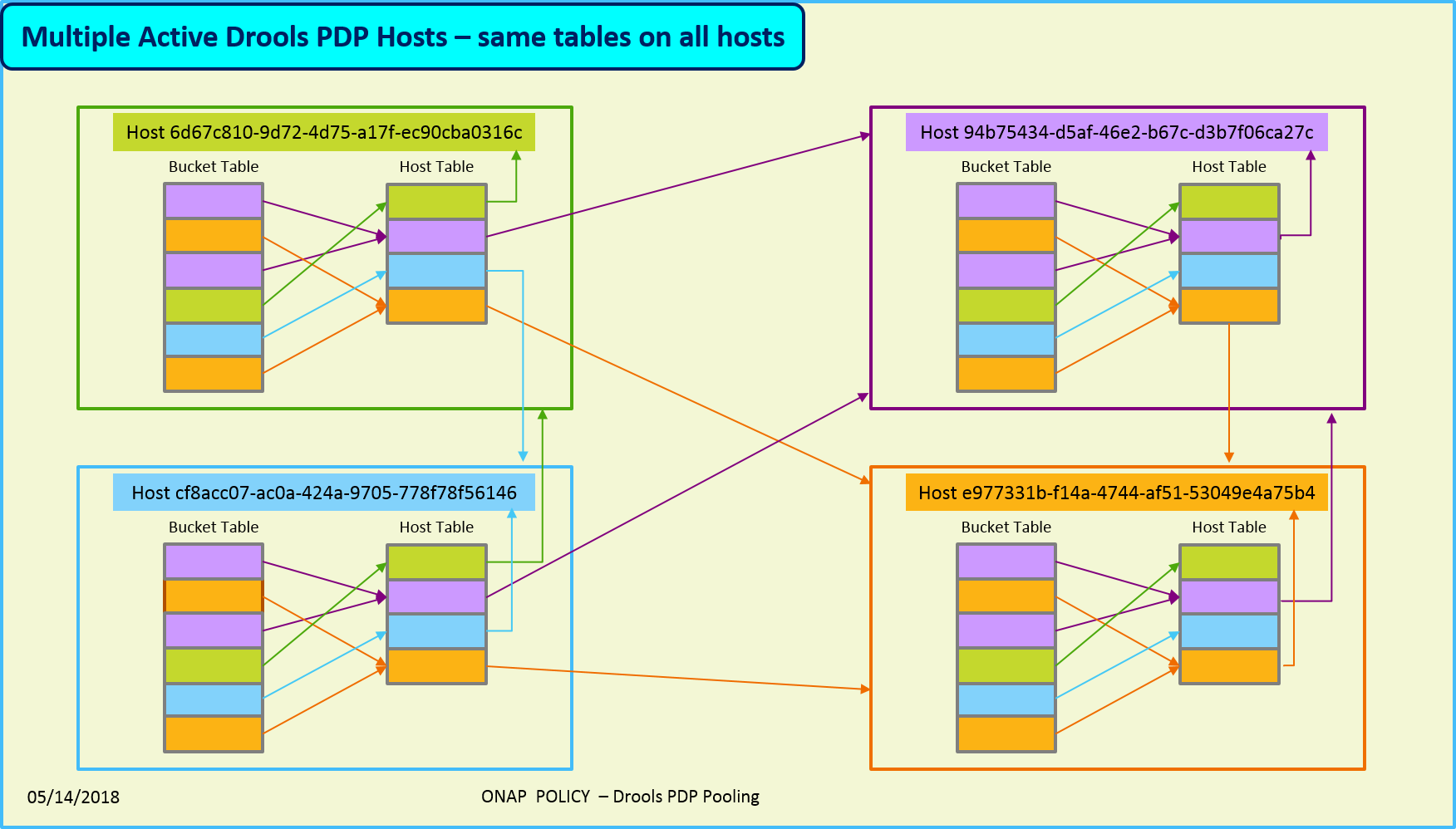

- Allocates buckets to each host
  - Requests are assigned to buckets based on their respective “request IDs”
- No session persistence
- No objects copied between hosts
- Requires feature(s): distributed-locking
- Precludes feature(s): session-persistence, active-standby, state-management

Example Scenario

1. An incoming DMaaP message is received on a topic; all hosts are listening, but only one random host receives the message
2. Decode the message to determine the “request ID” key (message-specific operation)
3. Hash the request ID to determine the bucket number
4. Look up the host associated with the hash bucket (most likely remote)
5. Publish a “forward” message to the internal DMaaP topic, including the remote host, bucket number, DMaaP topic information, and message body
6. The remote host verifies ownership of the bucket and routes the DMaaP message to its own rule engine for processing
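The routing steps above can be sketched roughly as follows. This is an illustration only, not the feature's actual code: the function names, the use of CRC32 as the hash, the dictionary layout of the “forward” message, and the bucket count of 32 are all assumptions made for the example.

```python
import zlib

NUM_BUCKETS = 32  # assumption: borrowed from the 32-bucket example in this document


def bucket_for(request_id: str, num_buckets: int = NUM_BUCKETS) -> int:
    """Hash a request ID to a bucket number (CRC32 is an assumed, stable hash)."""
    return zlib.crc32(request_id.encode("utf-8")) % num_buckets


def route(request_id, body, topic, assignments, this_host):
    """Decide whether to process a message locally or forward it.

    `assignments` is a list where assignments[bucket] is the owning host name.
    """
    bucket = bucket_for(request_id, len(assignments))
    owner = assignments[bucket]
    if owner == this_host:
        return {"action": "process", "bucket": bucket}
    # Hypothetical "forward" message: remote host, bucket, topic info, and body.
    return {
        "action": "forward",
        "host": owner,
        "bucket": bucket,
        "topic": topic,
        "body": body,
    }


def accept_forward(message, assignments, this_host) -> bool:
    """On the receiving host: verify bucket ownership before processing."""
    return assignments[message["bucket"]] == this_host
```

Because every host hashes the same request ID to the same bucket, all messages for one request end up on the same host no matter which host first received them.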

The figure below shows several different hosts in a pool. Each host has a copy of the bucket assignments, which specifies which buckets are assigned to which hosts. Incoming requests are mapped to a bucket, and a bucket is mapped to a host, to which the request is routed. The host table includes an entry for each active host in the pool, to which one or more buckets are mapped.
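As a rough illustration of the two structures described here, the bucket assignments can be modeled as a list indexed by bucket number, and the host table derived from it by grouping buckets under their owner. The function name and host names are hypothetical.

```python
from collections import defaultdict


def host_table(assignments):
    """Group bucket numbers by owning host.

    `assignments[bucket]` holds the name of the host that owns that bucket.
    """
    table = defaultdict(list)
    for bucket, host in enumerate(assignments):
        table[host].append(bucket)
    return dict(table)


# Four buckets spread over three hypothetical hosts.
example = ["host-1", "host-2", "host-1", "host-3"]
print(host_table(example))
```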

Bucket Reassignment

- When a host goes up or down, buckets are rebalanced
  - Attempts to maintain an even distribution
  - Leaves buckets with their current owner, where possible
  - Takes a few buckets from each host to assign to new hosts

For example, in the diagram below, the left side shows how 32 buckets might be assigned among four different hosts. When the first host fails, the buckets from host 1 would be reassigned among the remaining hosts, similar to what is shown on the right side of the diagram. Any requests that were being processed by host 1 will be lost and must be restarted. However, the buckets that had already been assigned to the remaining hosts are unchanged, thus requests associated with those buckets are not impacted by the loss of host 1.
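One way to picture the rebalancing rules is the sketch below. It is not the feature's actual algorithm, only a minimal version under assumptions: `rebalance` is a hypothetical name, and ties between hosts are broken by host name.

```python
def rebalance(assignments, active_hosts):
    """Return new bucket assignments that are even across active hosts,
    moving as few buckets as possible."""
    hosts = sorted(active_hosts)
    if not hosts:
        raise ValueError("at least one active host is required")
    new = list(assignments)
    base, extra = divmod(len(new), len(hosts))

    # Count buckets still held by active hosts; collect buckets of lost hosts.
    counts = {h: 0 for h in hosts}
    orphans = []
    for bucket, owner in enumerate(new):
        if owner in counts:
            counts[owner] += 1
        else:
            orphans.append(bucket)

    # Hosts that already hold the most buckets get the "extra" slots,
    # so fewer buckets have to move.
    ranked = sorted(hosts, key=lambda h: -counts[h])
    target = {h: base + (1 if i < extra else 0) for i, h in enumerate(ranked)}

    # Take a few buckets from each host that is over its target.
    for bucket in range(len(new) - 1, -1, -1):
        owner = new[bucket]
        if owner in counts and counts[owner] > target[owner]:
            counts[owner] -= 1
            orphans.append(bucket)

    # Hand the freed buckets to hosts that are under their target.
    for h in hosts:
        while counts[h] < target[h]:
            new[orphans.pop()] = h
            counts[h] += 1
    return new
```

With 32 buckets spread evenly over four hosts, removing one host from `active_hosts` reassigns only that host's eight buckets; the other 24 keep their owner, matching the behavior described above.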

Usage

For pooling to be enabled, the distributed-locking feature must also be enabled.

Enable Feature Pooling

    policy stop
    features enable distributed-locking
    features enable pooling-dmaap

The configuration is located at:

$POLICY_HOME/config/feature-pooling-dmaap.properties

Start the PDP-D using pooling

    policy start

Disable the pooling feature

    policy stop
    features disable pooling-dmaap
    policy start
