OOM User Guide

The ONAP Operations Manager (OOM) provides the ability to manage the entire life-cycle of an ONAP installation, from the initial deployment to final decommissioning. This guide provides instructions for users of ONAP to use the Kubernetes/Helm system as a complete ONAP management system.

This guide provides many examples of Helm command line operations. For a complete description of these commands please refer to the Helm Documentation.

The following sections describe the life-cycle operations:

Deploy - with built-in component dependency management

Configure - unified configuration across all ONAP components

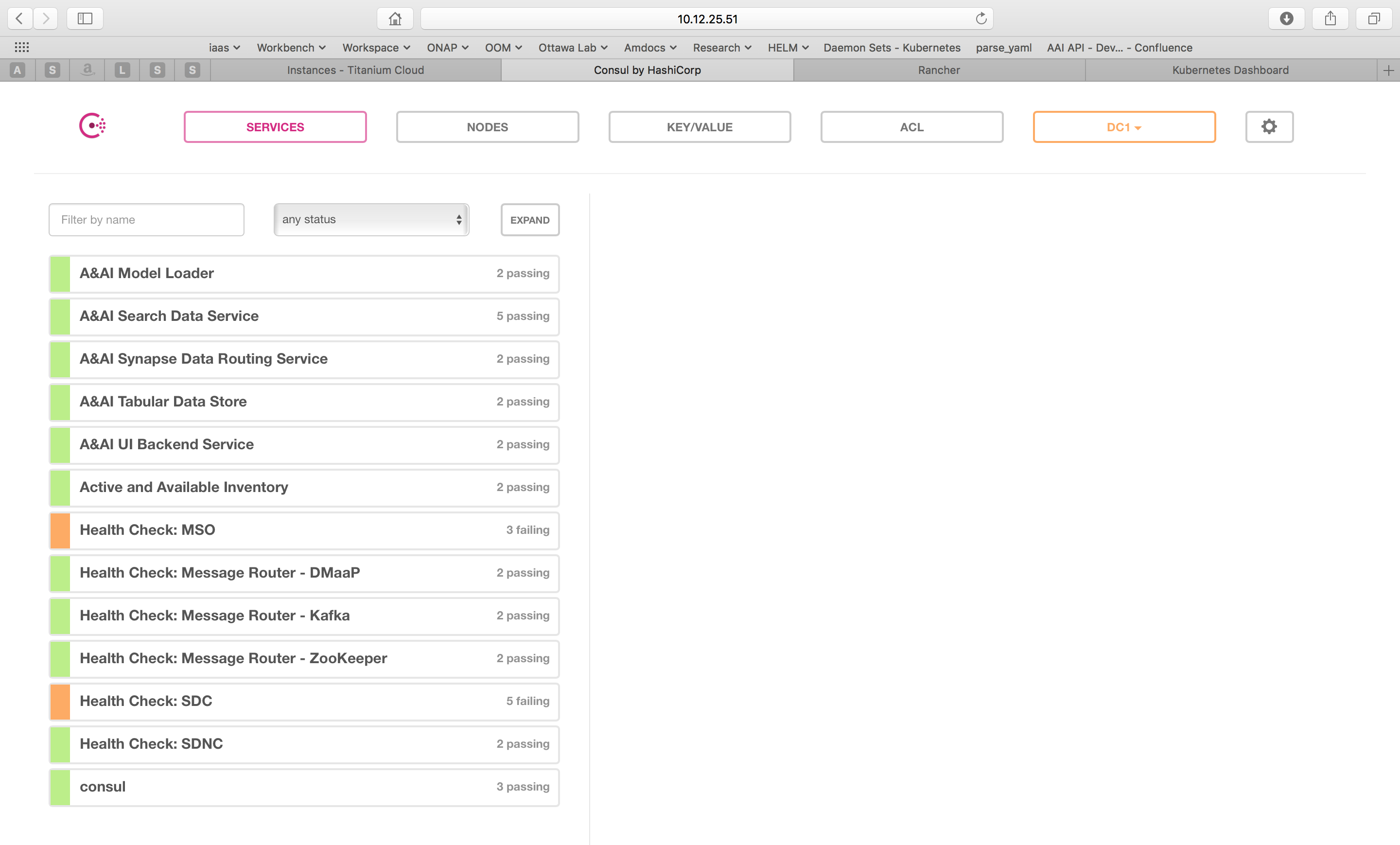

Monitor - real-time health monitoring feeding to a Consul UI and Kubernetes

Heal - failed ONAP containers are recreated automatically

Scale - cluster ONAP services to enable seamless scaling

Upgrade - change-out containers or configuration with little or no service impact

Delete - cleanup individual containers or entire deployments

Deploy

The OOM team, with assistance from the ONAP project teams, has built a comprehensive set of Helm charts (YAML files very similar to TOSCA files) that describe the composition of each of the ONAP components and the relationships within and between components. Using this model, Helm is able to deploy all of ONAP with a few simple commands.

Pre-requisites

Your environment must have kubectl, Helm, the Strimzi Apache Kafka operator and Cert-Manager set up. This setup is a one-time activity.

Install Kubectl

Enter the following to install kubectl, the Kubernetes command line interface used to manage a Kubernetes cluster (the commands shown are for Ubuntu; other operating systems differ slightly):

> curl -LO https://storage.googleapis.com/kubernetes-release/release/v1.19.11/bin/linux/amd64/kubectl

> chmod +x ./kubectl

> sudo mv ./kubectl /usr/local/bin/kubectl

> mkdir ~/.kube

Paste kubectl config from Rancher (see the OOM Cloud Setup Guide for alternative Kubernetes environment setups) into the ~/.kube/config file.

Verify that the Kubernetes config is correct:

> kubectl get pods --all-namespaces

At this point you should see Kubernetes pods running.

Install Helm

Helm is used by OOM for package and configuration management. To install Helm, enter the following:

> wget https://get.helm.sh/helm-v3.6.3-linux-amd64.tar.gz

> tar -zxvf helm-v3.6.3-linux-amd64.tar.gz

> sudo mv linux-amd64/helm /usr/local/bin/helm

Verify the Helm version with:

> helm version

Install Strimzi Apache Kafka Operator

Details on how to install Strimzi Apache Kafka can be found here.

Install Cert-Manager

Details on how to install Cert-Manager can be found here.
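
Before continuing, it can help to confirm that the command line tools installed above are actually on the PATH. The following is a minimal sketch, not part of OOM: the helper name missing_tools is hypothetical, and it only checks for the kubectl and helm binaries (it does not verify the Strimzi or Cert-Manager installations in the cluster).

```shell
# Print the name of every tool passed as an argument that is not on PATH.
# (missing_tools is a hypothetical helper, not an OOM command.)
missing_tools() {
  for tool in "$@"; do
    command -v "$tool" >/dev/null 2>&1 || printf '%s\n' "$tool"
  done
}

missing=$(missing_tools kubectl helm)
if [ -n "$missing" ]; then
  echo "Missing tools:" $missing
else
  echo "kubectl and helm found"
fi
```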

Install the Helm Repo

Once kubectl and Helm are set up, a local Helm server is needed to serve the ONAP charts:

> helm install osn/onap

Note

The osn repo is not currently available so creation of a local repository is required.

Helm is able to use charts served from a repository. It comes set up with a default CNCF-provided repository of curated applications for Kubernetes, called stable, which should be removed to avoid confusion:

> helm repo remove stable

To prepare your system for an installation of ONAP, you’ll need to:

> git clone -b jakarta --recurse-submodules -j2 http://gerrit.onap.org/r/oom

> cd oom/kubernetes

To install a local Helm server:

> curl -LO https://s3.amazonaws.com/chartmuseum/release/latest/bin/linux/amd64/chartmuseum

> chmod +x ./chartmuseum

> sudo mv ./chartmuseum /usr/local/bin

To set up a local Helm server to serve the ONAP charts:

> mkdir -p ~/helm3-storage

> chartmuseum --storage local --storage-local-rootdir ~/helm3-storage --port 8879 &

Note the port number that is listed and use it in the Helm repo add as follows:

> helm repo add local http://127.0.0.1:8879

To get a list of all of the available Helm chart repositories:

> helm repo list

NAME URL

local http://127.0.0.1:8879

Then build your local Helm repository:

> make SKIP_LINT=TRUE [HELM_BIN=<HELM_PATH>] all

- HELM_BIN

Sets the helm binary to be used. The default value uses helm from PATH.

The Helm search command reads through all of the repositories configured on the system, and looks for matches:

> helm search repo local

NAME VERSION DESCRIPTION

local/appc 10.0.0 Application Controller

local/clamp 10.0.0 ONAP Clamp

local/common 10.0.0 Common templates for inclusion in other charts

local/onap 10.0.0 Open Network Automation Platform (ONAP)

local/robot 10.0.0 A helm Chart for kubernetes-ONAP Robot

local/so 10.0.0 ONAP Service Orchestrator

In any case, setup of the Helm repository is a one-time activity.

Next, install Helm Plugins required to deploy the ONAP release:

> cp -R ~/oom/kubernetes/helm/plugins/ ~/.local/share/helm/plugins

Once the repo is set up, installation of ONAP can be done with a single command:

> helm deploy development local/onap --namespace onap --set global.masterPassword=password

This will install ONAP from a local repository in a ‘development’ Helm release. As described below, to override the default configuration values provided by OOM, an environment file can be provided on the command line as follows:

> helm deploy development local/onap --namespace onap -f overrides.yaml --set global.masterPassword=password

Note

Refer to the Configure section for how to update overrides.yaml and values.yaml.

To get a summary of the status of all of the pods (containers) running in your deployment:

> kubectl get pods --namespace onap -o=wide

Note

The Kubernetes namespace concept allows for multiple instances of a component (such as all of ONAP) to co-exist with other components in the same Kubernetes cluster by isolating them entirely. Namespaces share only the hosts that form the cluster, thus providing isolation between, for example, production and development systems.

Note

The Helm --name option refers to a release name and not a Kubernetes namespace.

To install a specific version of a single ONAP component (so in this example) with the given release name enter:

> helm deploy so onap/so --version 10.0.0 --set global.masterPassword=password --set global.flavor=unlimited --namespace onap

Note

The components that the component being installed depends on should already be installed.

To display details of a specific resource or group of resources, type:

> kubectl describe pod so-1071802958-6twbl

where the pod identifier refers to the auto-generated pod identifier.

Configure

Each project within ONAP has its own configuration data generally consisting of: environment variables, configuration files, and database initial values. Many technologies are used across the projects resulting in significant operational complexity and an inability to apply global parameters across the entire ONAP deployment. OOM solves this problem by introducing a common configuration technology, Helm charts, that provide a hierarchical configuration with the ability to override values with higher level charts or command line options.

The structure of the configuration of ONAP is shown in the following diagram. Note that key/value pairs of a parent will always take precedence over those of a child. Also note that values set on the command line have the highest precedence of all.

![digraph config {

{

node [shape=folder]

oValues [label="values.yaml"]

demo [label="onap-demo.yaml"]

prod [label="onap-production.yaml"]

oReq [label="Chart.yaml"]

soValues [label="values.yaml"]

soReq [label="Chart.yaml"]

mdValues [label="values.yaml"]

}

{

oResources [label="resources"]

}

onap -> oResources

onap -> oValues

oResources -> environments

oResources -> oReq

oReq -> so

environments -> demo

environments -> prod

so -> soValues

so -> soReq

so -> charts

charts -> mariadb

mariadb -> mdValues

}](_images/graphviz-7942e393611f8264b19725fc42aa660d2a53e5e3.png)
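
The precedence rules described above can be sketched as follows. This is an illustration under stated assumptions, not an official procedure: the overrides file is written to a temporary directory, its content is a hypothetical minimal example, and the deployment command is only printed, not executed. With these inputs, the so chart's own default for replicaCount would be overridden by the value in the overrides file, which in turn would be overridden by the value given with --set on the command line.

```shell
# Hypothetical overrides file; the keys mirror the so section used in this guide.
workdir=$(mktemp -d)
cat > "$workdir/overrides.yaml" <<'EOF'
so:
  enabled: true
  replicaCount: 1
EOF

# Build and print (rather than run) the deployment command.
# The --set value is given on the command line, so it would take
# precedence over the replicaCount in overrides.yaml.
deploy_cmd="helm deploy development local/onap --namespace onap -f $workdir/overrides.yaml --set global.masterPassword=password --set so.replicaCount=2"
echo "$deploy_cmd"
```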

The top level onap/values.yaml file contains the values required to be set before deploying ONAP. Here are the contents of this file:

# Copyright © 2019 Amdocs, Bell Canada

# Copyright (c) 2020 Nordix Foundation, Modifications

# Modifications Copyright © 2020-2021 Nokia

#

# Licensed under the Apache License, Version 2.0 (the "License");

# you may not use this file except in compliance with the License.

# You may obtain a copy of the License at

#

# http://www.apache.org/licenses/LICENSE-2.0

#

# Unless required by applicable law or agreed to in writing, software

# distributed under the License is distributed on an "AS IS" BASIS,

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

# See the License for the specific language governing permissions and

# limitations under the License.

#################################################################

# Global configuration overrides.

#

# These overrides will affect all helm charts (ie. applications)

# that are listed below and are 'enabled'.

#################################################################

global:

# Change to an unused port prefix range to prevent port conflicts

# with other instances running within the same k8s cluster

nodePortPrefix: 302

nodePortPrefixExt: 304

# Install test components

# test components are out of the scope of ONAP but allow to have a entire

# environment to test the different features of ONAP

# Current tests environments provided:

# - netbox (needed for CDS IPAM)

# - AWX (needed for XXX)

# - EJBCA Server (needed for CMPv2 tests)

# Today, "contrib" chart that hosting these components must also be enabled

# in order to make it work. So `contrib.enabled` must have the same value than

# addTestingComponents

addTestingComponents: &testing false

# ONAP Repository

# Four different repositories are used

# You can change individually these repositories to ones that will serve the

# right images. If credentials are needed for one of them, see below.

repository: nexus3.onap.org:10001

dockerHubRepository: &dockerHubRepository docker.io

elasticRepository: &elasticRepository docker.elastic.co

googleK8sRepository: k8s.gcr.io

githubContainerRegistry: ghcr.io

#/!\ DEPRECATED /!\

# Legacy repositories which will be removed at the end of migration.

# Please don't use

loggingRepository: *elasticRepository

busyboxRepository: *dockerHubRepository

# Default credentials

# they're optional. If the target repository doesn't need them, comment them

repositoryCred:

user: docker

password: docker

# If you want / need authentication on the repositories, please set

# Don't set them if the target repo is the same than others

# so id you've set repository to value `my.private.repo` and same for

# dockerHubRepository, you'll have to configure only repository (exclusive) OR

# dockerHubCred.

# dockerHubCred:

# user: myuser

# password: mypassord

# elasticCred:

# user: myuser

# password: mypassord

# googleK8sCred:

# user: myuser

# password: mypassord

# common global images

# Busybox for simple shell manipulation

busyboxImage: busybox:1.34.1

# curl image

curlImage: curlimages/curl:7.80.0

# env substitution image

envsubstImage: dibi/envsubst:1

# generate htpasswd files image

# there's only latest image for htpasswd

htpasswdImage: xmartlabs/htpasswd:latest

# kubenretes client image

kubectlImage: bitnami/kubectl:1.22.4

# logging agent

loggingImage: beats/filebeat:5.5.0

# mariadb client image

mariadbImage: bitnami/mariadb:10.5.8

# nginx server image

nginxImage: bitnami/nginx:1.21.4

# postgreSQL client and server image

postgresImage: crunchydata/crunchy-postgres:centos8-13.2-4.6.1

# readiness check image

readinessImage: onap/oom/readiness:3.0.1

# image pull policy

pullPolicy: Always

# default java image

jreImage: onap/integration-java11:10.0.0

# default clusterName

# {{ template "common.fullname" . }}.{{ template "common.namespace" . }}.svc.{{ .Values.global.clusterName }}

clusterName: cluster.local

# default mount path root directory referenced

# by persistent volumes and log files

persistence:

mountPath: /dockerdata-nfs

enableDefaultStorageclass: false

parameters: {}

storageclassProvisioner: kubernetes.io/no-provisioner

volumeReclaimPolicy: Retain

# override default resource limit flavor for all charts

flavor: unlimited

# flag to enable debugging - application support required

debugEnabled: false

# default password complexity

# available options: phrase, name, pin, basic, short, medium, long, maximum security

# More datails: https://www.masterpasswordapp.com/masterpassword-algorithm.pdf

passwordStrength: long

# configuration to set log level to all components (the one that are using

# "common.log.level" to set this)

# can be overrided per components by setting logConfiguration.logLevelOverride

# to the desired value

# logLevel: DEBUG

# Global ingress configuration

ingress:

enabled: false

virtualhost:

baseurl: "simpledemo.onap.org"

# Global Service Mesh configuration

# POC Mode, don't use it in production

serviceMesh:

enabled: false

tls: true

# be aware that linkerd is not well tested

engine: "istio" # valid value: istio or linkerd

# metrics part

# If enabled, exporters (for prometheus) will be deployed

# if custom resources set to yes, CRD from prometheus operartor will be

# created

# Not all components have it enabled.

#

metrics:

enabled: true

custom_resources: false

# Disabling AAF

# POC Mode, only for use in development environment

# Keep it enabled in production

aafEnabled: true

aafAgentImage: onap/aaf/aaf_agent:2.1.20

# Disabling MSB

# POC Mode, only for use in development environment

msbEnabled: true

# default values for certificates

certificate:

default:

renewBefore: 720h #30 days

duration: 8760h #365 days

subject:

organization: "Linux-Foundation"

country: "US"

locality: "San-Francisco"

province: "California"

organizationalUnit: "ONAP"

issuer:

group: certmanager.onap.org

kind: CMPv2Issuer

name: cmpv2-issuer-onap

# Enabling CMPv2

cmpv2Enabled: true

platform:

certificates:

clientSecretName: oom-cert-service-client-tls-secret

keystoreKeyRef: keystore.jks

truststoreKeyRef: truststore.jks

keystorePasswordSecretName: oom-cert-service-certificates-password

keystorePasswordSecretKey: password

truststorePasswordSecretName: oom-cert-service-certificates-password

truststorePasswordSecretKey: password

# Indicates offline deployment build

# Set to true if you are rendering helm charts for offline deployment

# Otherwise keep it disabled

offlineDeploymentBuild: false

# TLS

# Set to false if you want to disable TLS for NodePorts. Be aware that this

# will loosen your security.

# if set this element will force or not tls even if serviceMesh.tls is set.

# tlsEnabled: false

# Logging

# Currently, centralized logging is not in best shape so it's disabled by

# default

centralizedLoggingEnabled: &centralizedLogging false

# Example of specific for the components where you want to disable TLS only for

# it:

# if set this element will force or not tls even if global.serviceMesh.tls and

# global.tlsEnabled is set otherwise.

# robot:

# tlsOverride: false

# Global storage configuration

# Set to "-" for default, or with the name of the storage class

# Please note that if you use AAF, CDS, SDC, Netbox or Robot, you need a

# storageclass with RWX capabilities (or set specific configuration for these

# components).

# persistence:

# storageClass: "-"

# Example of specific for the components which requires RWX:

# aaf:

# persistence:

# storageClassOverride: "My_RWX_Storage_Class"

# contrib:

# netbox:

# netbox-app:

# persistence:

# storageClassOverride: "My_RWX_Storage_Class"

# cds:

# cds-blueprints-processor:

# persistence:

# storageClassOverride: "My_RWX_Storage_Class"

# sdc:

# sdc-onboarding-be:

# persistence:

# storageClassOverride: "My_RWX_Storage_Class"

#################################################################

# Enable/disable and configure helm charts (ie. applications)

# to customize the ONAP deployment.

#################################################################

aaf:

enabled: false

aaf-sms:

cps:

# you must always set the same values as value set in cps.enabled

enabled: false

aai:

enabled: false

appc:

enabled: false

config:

openStackType: OpenStackProvider

openStackName: OpenStack

openStackKeyStoneUrl: http://localhost:8181/apidoc/explorer/index.html

openStackServiceTenantName: default

openStackDomain: default

openStackUserName: admin

openStackEncryptedPassword: admin

cassandra:

enabled: false

cds:

enabled: false

clamp:

enabled: false

cli:

enabled: false

consul:

enabled: false

# Today, "contrib" chart that hosting these components must also be enabled

# in order to make it work. So `contrib.enabled` must have the same value than

# addTestingComponents

contrib:

enabled: *testing

cps:

enabled: false

dcaegen2:

enabled: false

dcaegen2-services:

enabled: false

dcaemod:

enabled: false

holmes:

enabled: false

dmaap:

enabled: false

# Today, "logging" chart that perform the central part of logging must also be

# enabled in order to make it work. So `logging.enabled` must have the same

# value than centralizedLoggingEnabled

log:

enabled: *centralizedLogging

sniro-emulator:

enabled: false

oof:

enabled: false

mariadb-galera:

enabled: false

msb:

enabled: false

multicloud:

enabled: false

nbi:

enabled: false

config:

# openstack configuration

openStackRegion: "Yolo"

openStackVNFTenantId: "1234"

policy:

enabled: false

pomba:

enabled: false

portal:

enabled: false

robot:

enabled: false

config:

# openStackEncryptedPasswordHere should match the encrypted string used in SO and APPC and overridden per environment

openStackEncryptedPasswordHere: "c124921a3a0efbe579782cde8227681e"

sdc:

enabled: false

sdnc:

enabled: false

replicaCount: 1

mysql:

replicaCount: 1

so:

enabled: false

replicaCount: 1

liveness:

# necessary to disable liveness probe when setting breakpoints

# in debugger so K8s doesn't restart unresponsive container

enabled: false

# so server configuration

config:

# message router configuration

dmaapTopic: "AUTO"

# openstack configuration

openStackUserName: "vnf_user"

openStackRegion: "RegionOne"

openStackKeyStoneUrl: "http://1.2.3.4:5000"

openStackServiceTenantName: "service"

openStackEncryptedPasswordHere: "c124921a3a0efbe579782cde8227681e"

# in order to enable static password for so-monitoring uncomment:

# so-monitoring:

# server:

# monitoring:

# password: demo123456!

strimzi:

enabled: false

uui:

enabled: false

vfc:

enabled: false

vid:

enabled: false

vnfsdk:

enabled: false

modeling:

enabled: false

platform:

enabled: false

a1policymanagement:

enabled: false

cert-wrapper:

enabled: true

repository-wrapper:

enabled: true

roles-wrapper:

enabled: true

One may wish to create a values file that is specific to a given deployment such that it can be differentiated from other deployments. For example, an onap-development.yaml file may create a minimal environment for development while onap-production.yaml might describe a production deployment that operates independently of the developer version.

For example, if the production OpenStack instance was different from a developer’s instance, the onap-production.yaml file may contain a different value for the vnfDeployment/openstack/oam_network_cidr key as shown below.

nsPrefix: onap

nodePortPrefix: 302

apps: consul msb mso message-router sdnc vid robot portal policy appc aai

sdc dcaegen2 log cli multicloud clamp vnfsdk aaf kube2msb

dataRootDir: /dockerdata-nfs

# docker repositories

repository:

onap: nexus3.onap.org:10001

oom: oomk8s

aai: aaionap

filebeat: docker.elastic.co

image:

pullPolicy: Never

# vnf deployment environment

vnfDeployment:

openstack:

ubuntu_14_image: "Ubuntu_14.04.5_LTS"

public_net_id: "e8f51956-00dd-4425-af36-045716781ffc"

oam_network_id: "d4769dfb-c9e4-4f72-b3d6-1d18f4ac4ee6"

oam_subnet_id: "191f7580-acf6-4c2b-8ec0-ba7d99b3bc4e"

oam_network_cidr: "192.168.30.0/24"

<...>

To deploy ONAP with this environment file, enter:

> helm deploy local/onap -n onap -f onap/resources/environments/onap-production.yaml --set global.masterPassword=password

#################################################################

# Global configuration overrides.

#

# These overrides will affect all helm charts (ie. applications)

# that are listed below and are 'enabled'.

#################################################################

global:

# Change to an unused port prefix range to prevent port conflicts

# with other instances running within the same k8s cluster

nodePortPrefix: 302

# image repositories

repository: nexus3.onap.org:10001

repositorySecret: eyJuZXh1czMub25hcC5vcmc6MTAwMDEiOnsidXNlcm5hbWUiOiJkb2NrZXIiLCJwYXNzd29yZCI6ImRvY2tlciIsImVtYWlsIjoiQCIsImF1dGgiOiJaRzlqYTJWeU9tUnZZMnRsY2c9PSJ9fQ==

# readiness check

readinessImage: onap/oom/readiness:3.0.1

# logging agent

loggingRepository: docker.elastic.co

# image pull policy

pullPolicy: IfNotPresent

# override default mount path root directory

# referenced by persistent volumes and log files

persistence:

mountPath: /dockerdata

# flag to enable debugging - application support required

debugEnabled: true

#################################################################

# Enable/disable and configure helm charts (ie. applications)

# to customize the ONAP deployment.

#################################################################

aaf:

enabled: false

aai:

enabled: false

appc:

enabled: false

clamp:

enabled: true

cli:

enabled: false

consul: # Consul Health Check Monitoring

enabled: false

cps:

enabled: false

dcaegen2:

enabled: false

log:

enabled: false

message-router:

enabled: false

mock:

enabled: false

msb:

enabled: false

multicloud:

enabled: false

policy:

enabled: false

portal:

enabled: false

robot: # Robot Health Check

enabled: true

sdc:

enabled: false

sdnc:

enabled: false

so: # Service Orchestrator

enabled: true

replicaCount: 1

liveness:

# necessary to disable liveness probe when setting breakpoints

# in debugger so K8s doesn't restart unresponsive container

enabled: true

# so server configuration

config:

# message router configuration

dmaapTopic: "AUTO"

# openstack configuration

openStackUserName: "vnf_user"

openStackRegion: "RegionOne"

openStackKeyStoneUrl: "http://1.2.3.4:5000"

openStackServiceTenantName: "service"

openStackEncryptedPasswordHere: "c124921a3a0efbe579782cde8227681e"

# configure embedded mariadb

mariadb:

config:

mariadbRootPassword: password

uui:

enabled: false

vfc:

enabled: false

vid:

enabled: false

vnfsdk:

enabled: false

When deploying all of ONAP, the dependencies section of the Chart.yaml file controls which ONAP components are included and at what version. Here is an excerpt of this file:

dependencies:

<...>

- name: so

version: ~10.0.0

repository: '@local'

condition: so.enabled

<...>

The ~ operator in the so version value indicates that the latest “10.0.X” version of so shall be used (that is, any version at or above 10.0.0 and below 10.1.0), thus allowing the chart to pick up patch-level upgrades that don’t impact the so API; hence, if version 10.0.1 is available in the repository, it will be installed in this case.
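
To make the version range concrete, the following sketch checks candidate versions against a tilde constraint. It assumes the usual semantic-version range rule used by Helm, in which ~10.0.0 means at least 10.0.0 and below 10.1.0. The function name tilde_matches is hypothetical, and the sketch handles only plain MAJOR.MINOR.PATCH versions (no pre-release or build suffixes).

```shell
# Return 0 when CANDIDATE satisfies the tilde constraint ~BASE:
# same major and minor version as BASE, and a patch level >= BASE's.
# (tilde_matches is a hypothetical helper for illustration only.)
tilde_matches() {
  base=${1#"~"}
  candidate=$2
  IFS=. read -r base_major base_minor base_patch <<EOF
$base
EOF
  IFS=. read -r cand_major cand_minor cand_patch <<EOF
$candidate
EOF
  [ "$cand_major" -eq "$base_major" ] &&
    [ "$cand_minor" -eq "$base_minor" ] &&
    [ "$cand_patch" -ge "$base_patch" ]
}

for v in 10.0.0 10.0.1 10.1.0; do
  if tilde_matches '~10.0.0' "$v"; then
    echo "$v satisfies ~10.0.0"
  else
    echo "$v does not satisfy ~10.0.0"
  fi
done
```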

The onap/resources/environments/dev.yaml file (see the excerpt below) enables fine-grained control over which components are included as part of this deployment. By changing the so line to enabled: false, the so component will not be deployed. If this change is part of an upgrade, the existing so component will be shut down. Other so parameters and even so child values can be modified; for example, the so liveness probe could be disabled (which is not recommended as this change would disable auto-healing of so).

#################################################################

# Global configuration overrides.

#

# These overrides will affect all helm charts (ie. applications)

# that are listed below and are 'enabled'.

#################################################################

global:

<...>

#################################################################

# Enable/disable and configure helm charts (ie. applications)

# to customize the ONAP deployment.

#################################################################

aaf:

enabled: false

<...>

so: # Service Orchestrator

enabled: true

replicaCount: 1

liveness:

# necessary to disable liveness probe when setting breakpoints

# in debugger so K8s doesn't restart unresponsive container

enabled: true

<...>

Accessing the ONAP Portal using OOM and a Kubernetes Cluster

The ONAP deployment created by OOM operates in a private IP network that isn’t publicly accessible (e.g. OpenStack VMs with a private internal network), which blocks access to the ONAP Portal. To enable direct access to this Portal from a user’s own environment (a laptop etc.), the portal application’s port 8989 is exposed through a Kubernetes LoadBalancer object.

Typically, to be able to access the Kubernetes nodes publicly a public address is assigned. In OpenStack this is a floating IP address.

When the portal-app chart is deployed a Kubernetes service is created that instantiates a load balancer. The LB chooses the private interface of one of the nodes as in the example below (10.0.0.4 is private to the K8s cluster only). Then to be able to access the portal on port 8989 from outside the K8s & OpenStack environment, the user needs to assign/get the floating IP address that corresponds to the private IP as follows:

> kubectl -n onap get services|grep "portal-app"

portal-app LoadBalancer 10.43.142.201 10.0.0.4 8989:30215/TCP,8006:30213/TCP,8010:30214/TCP 1d app=portal-app,release=dev

In this example, use the 10.0.0.4 private address as a key to find the corresponding public address, which in this example is 10.12.6.155. If you’re using OpenStack, you’ll do the lookup with the Horizon GUI or the OpenStack CLI for your tenant (openstack server list). That IP is then used in your /etc/hosts to map the fixed DNS aliases required by the ONAP Portal as shown below:

10.12.6.155 portal.api.simpledemo.onap.org

10.12.6.155 vid.api.simpledemo.onap.org

10.12.6.155 sdc.api.fe.simpledemo.onap.org

10.12.6.155 sdc.workflow.plugin.simpledemo.onap.org

10.12.6.155 sdc.dcae.plugin.simpledemo.onap.org

10.12.6.155 portal-sdk.simpledemo.onap.org

10.12.6.155 policy.api.simpledemo.onap.org

10.12.6.155 aai.api.sparky.simpledemo.onap.org

10.12.6.155 cli.api.simpledemo.onap.org

10.12.6.155 msb.api.discovery.simpledemo.onap.org

10.12.6.155 msb.api.simpledemo.onap.org

10.12.6.155 clamp.api.simpledemo.onap.org

10.12.6.155 so.api.simpledemo.onap.org

Ensure you’ve disabled any proxy settings in the browser you are using to access the portal, and then access the new SSL-encrypted URL:

https://portal.api.simpledemo.onap.org:30225/ONAPPORTAL/login.htm
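
The /etc/hosts entries listed above can be generated for any public address with a short helper. This is a sketch only: the function name onap_hosts_entries is hypothetical, the host aliases are copied from the list above, and 10.12.6.155 is the example address from this guide.

```shell
# Print one /etc/hosts line per DNS alias required by the ONAP Portal.
# (onap_hosts_entries is a hypothetical helper; aliases are taken from this guide.)
onap_hosts_entries() {
  ip=$1
  for alias in portal.api vid.api sdc.api.fe sdc.workflow.plugin \
               sdc.dcae.plugin portal-sdk policy.api aai.api.sparky \
               cli.api msb.api.discovery msb.api clamp.api so.api; do
    printf '%s %s.simpledemo.onap.org\n' "$ip" "$alias"
  done
}

# Review the generated lines before appending them to /etc/hosts, e.g.:
#   onap_hosts_entries 10.12.6.155 | sudo tee -a /etc/hosts
onap_hosts_entries 10.12.6.155
```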

Note

When using the HTTPS-based Portal URL, the browser needs to be configured to accept unsecure credentials. Additionally, when opening an application inside the Portal, the browser might block the content; in that case, disable the blocking and reload the page.

Note

Besides the ONAP Portal, the components can deliver additional user interfaces; please check the component-specific documentation.

Note

Kubernetes port forwarding was considered but discarded as it would require the end user to run a script that opens up port forwarding tunnels to each of the pods that provides a portal application widget.

Reverting to a VNC server similar to what was deployed in the Amsterdam release was also considered but there were many issues with resolution, lack of volume mount, /etc/hosts dynamic update, file upload that were a tall order to solve in time for the Beijing release.

Observations:

If you are not using floating IPs in your Kubernetes deployment and directly attaching a public IP address (i.e. by using your public provider network) to your K8S Node VMs’ network interface, then the output of ‘kubectl -n onap get services | grep “portal-app”’ will show your public IP instead of the private network’s IP. Therefore, you can grab this public IP directly (as compared to trying to find the floating IP first) and map this IP in /etc/hosts.

Monitor

All highly available systems include at least one facility to monitor the health of components within the system. Such health monitors are often used as inputs to distributed coordination systems (such as etcd, Zookeeper, or Consul) and monitoring systems (such as Nagios or Zabbix). OOM provides two mechanisms to monitor the real-time health of an ONAP deployment:

a Consul GUI for a human operator or downstream monitoring systems, and

a set of liveness probes which feed into the Kubernetes manager and enable automatic healing of failed containers, as described in the Heal section.

Within ONAP, Consul is the monitoring system of choice and deployed by OOM in two parts:

a three-way, centralized Consul server cluster is deployed as a highly available monitor of all of the ONAP components, and

a number of Consul agents.

The Consul server provides a user interface that allows a user to graphically view the current health status of all of the ONAP components for which agents have been created - a sample from the ONAP Integration labs follows:

To see the real-time health of a deployment go to: http://<kubernetes IP>:30270/ui/

where a GUI much like the following will be found:

Note

If the Consul GUI is not accessible, you can use the kubectl port-forward method to access an application.

Heal

The ONAP deployment is defined by Helm charts as mentioned earlier. These Helm charts are also used to implement automatic recoverability of ONAP components when individual components fail. Once ONAP is deployed, a “liveness” probe starts checking the health of the components after a specified startup time.

Should a liveness probe indicate a failed container it will be terminated and a replacement will be started in its place - containers are ephemeral. Should the deployment specification indicate that there are one or more dependencies to this container or component (for example a dependency on a database) the dependency will be satisfied before the replacement container/component is started. This mechanism ensures that, after a failure, all of the ONAP components restart successfully.

To test healing, the following command can be used to delete a pod:

> kubectl delete pod [pod name] -n [pod namespace]

One could then use the following command to monitor the pods and observe the pod being terminated and the service being automatically healed with the creation of a replacement pod:

> kubectl get pods --all-namespaces -o=wide
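
When many pods are running, it can be easier to watch healing by filtering the listing down to pods that are not yet healthy. The following is a minimal sketch, assuming the default tabular output of kubectl get pods with a header row; the function name unhealthy_pods is hypothetical. It locates the NAME and STATUS columns from the header, so it works with or without the NAMESPACE column.

```shell
# Read "kubectl get pods" output on stdin and print the name and status of
# every pod whose status is neither Running nor Completed.
# (unhealthy_pods is a hypothetical helper, not an OOM or kubectl command.)
unhealthy_pods() {
  awk '
    NR == 1 {
      # Find the NAME and STATUS columns from the header row.
      for (i = 1; i <= NF; i++) {
        if ($i == "NAME") name_col = i
        if ($i == "STATUS") status_col = i
      }
      next
    }
    status_col && $status_col != "Running" && $status_col != "Completed" {
      print $name_col, $status_col
    }
  '
}

# Example usage against a live cluster:
#   kubectl get pods --all-namespaces -o=wide | unhealthy_pods
```

An empty result suggests that every listed pod is either running or has completed.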

Scale

Many of the ONAP components are horizontally scalable, which allows them to adapt to the expected offered load. During the Beijing release scaling is static; that is, during deployment or upgrade a cluster size is defined, and this cluster will be maintained even in the presence of faults. The parameter that controls the cluster size of a given component is found in the values.yaml file for that component. Here is an excerpt that shows this parameter:

# default number of instances

replicaCount: 1

In order to change the size of a cluster, an operator could use a helm upgrade (described in detail in the next section) as follows:

> helm upgrade [RELEASE] [CHART] [flags]

The RELEASE argument can be obtained from the following command:

> helm list

For example:

> helm list

NAME REVISION UPDATED STATUS CHART APP VERSION NAMESPACE

dev 1 Wed Oct 14 13:49:52 2020 DEPLOYED onap-10.0.0 Jakarta onap

dev-cassandra 5 Thu Oct 15 14:45:34 2020 DEPLOYED cassandra-10.0.0 onap

dev-contrib 1 Wed Oct 14 13:52:53 2020 DEPLOYED contrib-10.0.0 onap

dev-mariadb-galera 1 Wed Oct 14 13:55:56 2020 DEPLOYED mariadb-galera-10.0.0 onap

Here the NAME column shows the release name. In our case we want to try the scale operation on cassandra, so the release name is dev-cassandra.

Now we need to obtain the chart name for cassandra. Use the below command to get the chart name:

> helm search cassandra

For example:

> helm search cassandra

NAME                    CHART VERSION  APP VERSION  DESCRIPTION
local/cassandra         10.0.0                      ONAP cassandra
local/portal-cassandra  10.0.0                      Portal cassandra
local/aaf-cass          10.0.0                      ONAP AAF cassandra
local/sdc-cs            10.0.0                      ONAP Service Design and Creation Cassandra

Here the NAME column shows the chart name. Since the scale operation is performed on cassandra, the corresponding chart name is local/cassandra.

With both arguments of the command known, the scale operation for cassandra can be performed as follows:

> helm upgrade dev-cassandra local/cassandra --set replicaCount=3

The same command, with a different replicaCount value, scales the cassandra database instances up or down.
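The scale operation can also be wrapped in a small shell helper. The following is a minimal sketch and not part of OOM: the function name scale_release and the RUN variable are hypothetical. It checks that the replica count is a non-negative integer, prints the helm upgrade command by default, and only executes it when RUN=1 is set.

```shell
# Hypothetical helper (not part of OOM): build the scale command for a release.
# By default the command is only printed; set RUN=1 to actually execute it.
scale_release() {
  release="$1"; chart="$2"; replicas="$3"
  # Reject anything that is not a non-negative integer.
  case "$replicas" in
    ''|*[!0-9]*)
      echo "replica count must be a non-negative integer" >&2
      return 1
      ;;
  esac
  # Re-use the positional parameters to hold the command line.
  set -- helm upgrade "$release" "$chart" --set "replicaCount=$replicas"
  if [ "${RUN:-0}" = "1" ]; then
    "$@"
  else
    echo "$@"
  fi
}

# Prints: helm upgrade dev-cassandra local/cassandra --set replicaCount=3
scale_release dev-cassandra local/cassandra 3
```

Printing the command first gives the operator a chance to review the release and chart names obtained from the earlier listings before anything is changed.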

The ONAP components use Kubernetes-provided facilities to build clustered, highly available systems, including Services with load-balancers, ReplicaSet, and StatefulSet. Some of the open-source projects used by the ONAP components directly support clustered configurations, for example ODL and MariaDB Galera.

The Kubernetes Services abstraction provides a consistent access point for each of the ONAP components, independent of the pod or container architecture of that component. For example, SDN-C uses OpenDaylight clustering with a default cluster size of three but uses a Kubernetes service to abstract this cluster from the other ONAP components. The cluster can therefore change size, and this change is isolated from the other ONAP components by the load-balancer implemented in the ODL service abstraction.

A ReplicaSet is a construct that is used to describe the desired state of the cluster. For example ‘replicas: 3’ indicates to Kubernetes that a cluster of 3 instances is the desired state. Should one of the members of the cluster fail, a new member will be automatically started to replace it.

Some of the ONAP components may need a more deterministic deployment, for example to enable intra-cluster communication. For these applications the component can be deployed as a Kubernetes StatefulSet, which maintains a persistent identifier for each pod and thus a stable network id. For example, the pod names might be web-0, web-1, ..., web-{N-1} for N ‘web’ pods, with corresponding DNS entries, so that intra-service communication is simple even if the pods are physically distributed across multiple nodes. An example of how these capabilities can be used is described in the Running Consul on Kubernetes tutorial.
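The naming scheme can be illustrated with a short shell sketch. It assumes the StatefulSet is fronted by a headless governing service and that the cluster uses the default cluster.local domain, in which case each pod is reachable as [pod name].[service name].[namespace].svc.cluster.local. The function name and the web and onap values are illustrative assumptions only.

```shell
# Illustrative helper: list the stable DNS names of the pods in a StatefulSet.
# Arguments: StatefulSet name, governing service name, namespace, replica count.
# Assumes the default cluster domain "cluster.local".
statefulset_pod_dns() {
  name="$1"; service="$2"; namespace="$3"; replicas="$4"
  i=0
  while [ "$i" -lt "$replicas" ]; do
    # Pod ordinals run from 0 to replicas-1.
    echo "${name}-${i}.${service}.${namespace}.svc.cluster.local"
    i=$((i + 1))
  done
}

# Prints:
#   web-0.web.onap.svc.cluster.local
#   web-1.web.onap.svc.cluster.local
#   web-2.web.onap.svc.cluster.local
statefulset_pod_dns web web onap 3
```

Because these names do not change when a pod is rescheduled, cluster members can be configured with a fixed list of peers.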

Upgrade

Helm has built-in capabilities to enable the upgrade of pods without causing a loss of the service being provided by that pod or pods (if configured as a cluster). As described in the OOM Developer’s Guide, ONAP components provide an abstracted ‘service’ end point with the pods or containers providing this service hidden from other ONAP components by a load balancer. This capability is used during upgrades to allow a pod with a new image to be added to the service before removing the pod with the old image. This ‘make before break’ capability ensures minimal downtime.

Prior to doing an upgrade, determine the status of the deployed charts:

> helm list

NAME  REVISION  UPDATED                  STATUS    CHART      NAMESPACE
so    1         Mon Feb 5 10:05:22 2020  DEPLOYED  so-10.0.0  onap

When upgrading a cluster, one parameter controls the minimum size of the cluster during the upgrade while another parameter controls the maximum number of pods in the cluster. For example, SDNC configured as a 3-way ODL cluster might require that during the upgrade no fewer than 2 pods are available at all times to provide service, while no more than 5 pods are ever deployed across the two versions at any one time to avoid depleting the cluster of resources. In this scenario, the SDNC cluster would start with 3 old pods, then Kubernetes may add a new pod (3 old, 1 new), delete one old (2 old, 1 new), add two new pods (2 old, 3 new) and finally delete the 2 old pods (3 new). During this sequence the constraints of a minimum of two pods and a maximum of five would be maintained while providing service the whole time.
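For a component deployed as a Kubernetes Deployment with a RollingUpdate strategy, these two limits correspond to the maxUnavailable and maxSurge settings. The arithmetic behind the 3-way cluster example can be sketched as follows; the function name is hypothetical, and whether a given ONAP chart exposes these settings is an assumption that should be checked against that chart.

```shell
# Hypothetical helper: derive the pod-count bounds of a rolling upgrade.
# Arguments: desired replicas, maxUnavailable, maxSurge (all absolute numbers).
rolling_update_bounds() {
  replicas="$1"; max_unavailable="$2"; max_surge="$3"
  # Fewest pods that must stay available while old pods are removed.
  min_available=$((replicas - max_unavailable))
  # Most pods (old plus new) that may exist at any one time.
  max_total=$((replicas + max_surge))
  echo "min_available=${min_available} max_total=${max_total}"
}

# A 3-replica cluster with maxUnavailable=1 and maxSurge=2
# prints: min_available=2 max_total=5
rolling_update_bounds 3 1 2
```

With these values the upgrade never drops below two available pods and never exceeds five pods in total, matching the sequence described above.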

Initiation of an upgrade is triggered by changes in the Helm charts. For example, if the image specified for one of the pods in the SDNC deployment specification were to change (i.e. point to a new Docker image in the nexus3 repository - commonly through the change of a deployment variable), the sequence of events described in the previous paragraph would be initiated.

For example, to upgrade a container by changing configuration, specifically an environment value:

> helm upgrade so onap/so --version 8.0.1 --set enableDebug=true

Issuing this command will result in the appropriate container being stopped by Kubernetes and replaced with a new container with the new environment value.

To upgrade a component to a new version with a new configuration file enter:

> helm upgrade so onap/so --version 8.0.1 -f environments/demo.yaml

To fetch release history enter:

> helm history so

REVISION  UPDATED                  STATUS      CHART      DESCRIPTION
1         Mon Feb 5 10:05:22 2020  SUPERSEDED  so-9.0.0   Install complete
2         Mon Feb 5 10:10:55 2020  DEPLOYED    so-10.0.0  Upgrade complete

Unfortunately, not all upgrades are successful. In recognition of this the lineup of pods within an ONAP deployment is tagged such that an administrator may force the ONAP deployment back to the previously tagged configuration or to a specific configuration, say to jump back two steps if an incompatibility between two ONAP components is discovered after the two individual upgrades succeeded.

This rollback functionality gives the administrator confidence that in the unfortunate circumstance of a failed upgrade the system can be rapidly brought back to a known good state. This process of rolling upgrades while under service is illustrated in this short YouTube video showing a Zero Downtime Upgrade of a web application while under a 10 million transaction per second load.

For example, to roll back to the previous system revision enter:

> helm rollback so 1

> helm history so

REVISION  UPDATED                  STATUS      CHART      DESCRIPTION
1         Mon Feb 5 10:05:22 2020  SUPERSEDED  so-9.0.0   Install complete
2         Mon Feb 5 10:10:55 2020  SUPERSEDED  so-10.0.0  Upgrade complete
3         Mon Feb 5 10:14:32 2020  DEPLOYED    so-9.0.0   Rollback to 1

Note

The description field can be overridden to document actions taken or include tracking numbers.

Many of the ONAP components contain their own databases which are used to record configuration or state information. The schemas of these databases may change from version to version in such a way that data stored within the database needs to be migrated between versions. If such a migration script is available it can be invoked during the upgrade (or rollback) by Container Lifecycle Hooks. Two such hooks are available, PostStart and PreStop, which containers can access by registering a handler against one or both. Note that it is the responsibility of the ONAP component owners to implement the hook handlers - which could be a shell script or a call to a specific container HTTP endpoint - following the guidelines listed on the Kubernetes site. Lifecycle hooks are not restricted to database migration or even upgrades but can be used anywhere specific operations need to be taken during lifecycle operations.
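A container specification fragment that registers handlers for both hooks might look like the following sketch. It is printed from a shell heredoc so that it can be redirected into a file or compared against a chart template. The script path /opt/app/migrate-db.sh, the /shutdown endpoint and port 8080 are hypothetical placeholders, not values taken from any ONAP chart.

```shell
# Print an illustrative "lifecycle" fragment for a container spec.
# All paths, endpoints and ports below are hypothetical placeholders.
print_lifecycle_fragment() {
  cat <<'EOF'
lifecycle:
  postStart:
    exec:
      # Shell-script handler, for example a database migration script.
      command: ["/bin/sh", "-c", "/opt/app/migrate-db.sh"]
  preStop:
    httpGet:
      # HTTP handler: a call to an endpoint exposed by the container.
      path: /shutdown
      port: 8080
EOF
}

print_lifecycle_fragment
```

The fragment shows the two handler styles mentioned above: an exec handler that runs a script and an httpGet handler that calls a container endpoint.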

OOM uses the Helm Kubernetes package manager to deploy ONAP components. Each component is arranged in a packaging format called a chart, a collection of files that describe a set of Kubernetes resources. Helm allows for rolling upgrades of the deployed ONAP components. To upgrade a component Helm release you will need an updated Helm chart. The chart might have modified, deleted or added values, deployment yamls, and more. To get the release name use:

> helm ls

To easily upgrade the release use:

> helm upgrade [RELEASE] [CHART]

To roll back to a previous release version use:

> helm rollback [flags] [RELEASE] [REVISION]

For example, to upgrade the onap-so helm release to the latest SO container release v1.1.2:

Edit the so values.yaml file, which is part of the chart

Change “so: nexus3.onap.org:10001/openecomp/so:v1.1.1” to “so: nexus3.onap.org:10001/openecomp/so:v1.1.2”

From the chart location run:

> helm upgrade onap-so .

The previous so pod will be terminated and a new so pod with an updated so container will be created.

Delete

Existing deployments can be partially or fully removed once they are no longer needed. To minimize errors it is recommended that before deleting components from a running deployment the operator perform a ‘dry-run’ to display exactly what will happen with a given command prior to actually deleting anything. For example:

> helm undeploy onap --dry-run

will display the outcome of deleting the ‘onap’ release from the deployment. To completely delete a release and remove it from the internal store enter:

> helm undeploy onap

Once the undeploy is complete, delete the namespace as well using the following command:

> kubectl delete namespace <name of namespace>

Note

Provide the namespace name that was used during deployment. For example:

> kubectl delete namespace onap

One can also remove individual components from a deployment by changing the ONAP configuration values. For example, to remove so from a running deployment enter:

> helm undeploy onap-so

This will remove so, as the configuration indicates it’s no longer part of the deployment. This might be useful if one wanted to replace just so by installing a custom version.